Return utl_raw.cast_to_varchar2(utl_encode.base64_decode(utl_raw.cast_to_raw(t))) Upd: after a minor adjustment I came up with this, so it works both ways now: function from_base64(t in varchar2) return varchar2 is

Return utl_raw.cast_to_varchar2(utl_encode.base64_encode(utl_raw.cast_to_raw(t))) function to_base64(t in varchar2) return varchar2 is I felt this is very useful with highly skewed data – you are not really playing with the whole data, but only on required data.I've implemented this to send Cyrillic e-mails through my MS Exchange server. Now your baby index is functional with just subset of data. SQL> select * from indextest where decode(COUNTRY,’INDIA’,NULL,’USA’,’USA’,’JAPAN’,’JAPAN’)=’JAPAN’ So, you need to use DECODE while refering the COUNTRY column in the WHERE clause – in a single word this is a function based index. Now your baby index is ready for use.īut the optimizer will not detect your idex directly as you used DECODE function while creating the index. I just put a DECODE function to make NULL where it found a COUNTRY is INDIA and the size of the index is just 0.06 MB fit into a single small extent – index for 48 rows while your table contains 459327 rows. SQL> create index i_country on indextest(decode(COUNTRY,’INDIA’,NULL,’USA’,’USA’,’JAPAN’,’JAPAN’)) Now let us create an index excluding for the nationalities ‘INDIA’. So, we know the size of the inedex in 9MB, just because the index is filled with 99% unwanted entries.

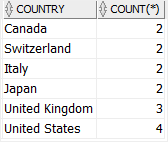

SQL> select bytes/1024/1024,extents from dba_segments where segment_name=’I_COUNTRY’ The sizse of the index is 9MB with 24 extents. |* 1 | TABLE ACCESS FULL| INDEXTEST | 419K| 43M| 691 (3)| 00:00:09 |ġ8312042 bytes sent via SQL*Net to clientģ37322 bytes received via SQL*Net from clientĪs expected, index is picking when you are refering JAPAN and INDIA is going for a FTS. SQL> select * from indextest where COUNTRY=’INDIA’ – dynamic sampling used for this statementĥ35 bytes received via SQL*Net from client Predicate Information (identified by operation id): | Id | Operation | Name | Rows | Bytes | Cost (%CPU)| Time | SQL> select * from indextest where COUNTRY=’JAPAN’ SQL> create index i_country on indextest(COUNTRY) Still let me create the standard index – just to capture the behaviour and size of the index. And being a very big index, you need to manage so many other factors like – maintenance, buffer cache usage, fragmentation and the impacts on other DML activities. My current data is highly skewed and creating an index for all data is really a waste. SQL> update indextest set COUNTRY=’USA’ where rownum update indextest set COUNTRY=’JAPAN’ where rownum commit Updated the table with COUNTRY as USA and JAPAN just for 24 for employees each. Now I have 459327 employees in my test table with default nationality as INDIA. I have created a table INDEXTEST from DBA_OBJECTS with 459327 rows and added a new column COUNTRY. SQL> update indextest set COUNTRY=’INDIA’ SQL> alter table indextest add (country varchar2(20)) Really they need to exist in the index? Can I avoid the 98% of the INDIANS so that the index can be very small – just 2% of the data size? Interestingly this index is not going to be useful to access 98% of the ‘INDIAN’ nationalities, but they need to exist in the index. This is highly skewed data and you want to create an index for this nationality column. For example, you got a employee table, where nationality as Say 98% of the data is same value and remaining 2% may be different values. Think about you need to create an index on a huge table with skewed data.

0 Comments

Leave a Reply. |

RSS Feed

RSS Feed